There are a number of common problems with object detection. For example, can your object detector detect people and horses in the following image?

What if the same image is rotated by 90 degrees? Can it detect people and horses now?

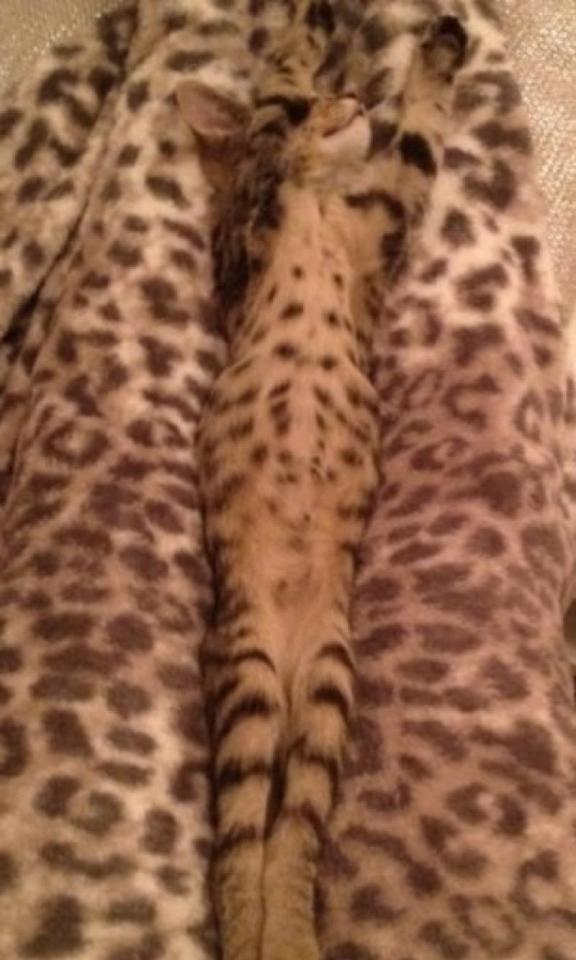

Or a cat in these images?

We have come a long way in the advancement of computer vision. Object detection algorithms using artificial intelligence (AI) have outperformed humans in certain tasks.

But why is it still a challenge to detect a person if the image is rotated 90 degrees, a cat is lying in an uncommon position, or an object is only partially visible?

A lot of models have been created for object detection and classification since AlexNet in 2012, and they are getting better in terms of accuracy and efficiency. However, most of the models are trained and tested in ideal scenarios.

In reality, the scenario where these models are used are not always ideal: the background may be cluttered, the object may be deformed, or perhaps occluded.

Take the example of the images of the cat below. Any object detector trained to detect a cat will, without failure, detect the cat in the image on the left. But for the image on the right, most detectors may fail to detect the cat.

Tasks that are considered trivial for humans are certainly a challenge in computer vision. It is easy for us humans to identify a person, regardless of the image orientation, or a cat in different poses, or a cup viewed from any angle.

Let’s take a look at some common problems with object detection.

6 Problems with Object Detection

1. Viewpoint Variation

An object viewed from different angles may look completely different. Take the example of a simple cup (referring to the images below).

The first image, showing a top view of a cup with black coffee in it looks completely different from the second image with a side and top view of a cup holding a cappuccino, and the third image with a side view of the cup.

This is one of the challenges with object detection because most detectors are trained with images only from a particular viewpoint.

2. Deformation

Many objects of interest are not rigid bodies and can be deformed in extreme ways. As an example, let’s look at images below of yogis in different positions.

If the object detector is trained to detect a person with training that only included a person sitting, standing, or walking, it might not be able to detect people in these images, as the features in these images may not match the ones that it learned about during training.

3. Occlusion

The objects of interest can be occluded. Sometimes only a small portion of an object (as little as a few pixels) may be visible.

For example, in the above image, the object (cup) is occluded by the person holding the cup. When we see only part of an object, in most cases, we can instantly identify what it is. Object detectors, however, do not perform in the same way.

Another example of occlusion in images is where a person is holding a mobile phone. It is a challenge for object detectors to detect mobile phones in these images:

4. Illumination Conditions

The effects of illumination are drastic on the pixel level. Objects exhibit different colors under different illumination conditions.

For example, an outdoor surveillance camera is exposed to different lighting conditions throughout the day, including bright daylight, evening, and night light.

An image of a pedestrian looks different in these varying illuminations. This affects the capability of the detector to detect objects robustly.

5.Cluttered or Textured Background

The objects of interest may blend into the background, making them hard to identify. For example, the cat and dog images below are camouflaged with the rug they are sitting or lying on. In these cases, object detectors will face challenges detecting cats and dogs.

6. Intra-Class Variation

An object of interest can often be relatively broad, such as a house. There are many different types of these objects, and each will have its own distinct appearance. All the images below are of different types of houses.

A good detector must be robust enough to detect the cross-product of all these variations, while also maintaining sensitivity to the inter-class variations.

Solving These Problems

To create a robust object detector that can overcome these common problems with object detection, ensure that there is a good variation of training data. Include different viewpoints, illumination conditions, and objects in different backgrounds.

If you cannot find real-world training data with all these variations, then use data augmentation techniques to synthesize the data you need.